Discovery Project – Ghola

Dune-Inspired Local AI Assistant

Overview

Ghola is a privacy-first, local voice assistant inspired by the Dune universe. Running entirely on macOS, it demonstrates a complete AI pipeline without any cloud dependencies: speech recognition, language understanding, and voice synthesis all happen on-device.

The assistant features a "Ghola" persona with Mentat-like analytical capabilities and philosophical depth from Frank Herbert's series. It responds to voice commands, controls smart home devices, and engages in conversation with a unique Dune-inspired personality.

Tech Stack

- Speech Recognition: Faster Whisper (base.en model, CPU-optimized)

- LLM: Ollama + Llama 3 (8B parameters, local inference)

- Text-to-Speech: Piper TTS (en_US-lessac-medium)

- Wake Word: openWakeWord (ONNX-based detection)

- Smart Home: Home Assistant + Matter Protocol (Docker)

- Audio I/O: sounddevice, soundfile, numpy

How It Works

The system follows a continuous voice loop:

- Wake Word Detection: Listens continuously for "Hey Mycroft" or "Hey Jarvis"

- Audio Capture: Records 5 seconds of audio from the microphone

- Transcription: Faster Whisper converts speech to text locally

- Intent Parsing: Simple keyword matching for lamp commands ("turn on/off the light")

- Action: Either controls the mock lamp via HTTP or sends the text to the local Ollama LLM

- Response: Piper TTS speaks the reply in a natural-sounding voice
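Below is a minimal sketch of the intent-parsing and action steps. It assumes plain word matching in Python. The vocabulary sets, the function names `parse_lamp_intent` and `handle_utterance`, and the reply for "off" are hypothetical. Only the "on" reply comes from the example interaction in this writeup. The lamp and LLM calls are passed in as callables, so the router can be exercised without a microphone, model, or network.

```python
import string
from typing import Callable, Optional

# Hypothetical vocabulary: the writeup only says "simple keyword matching".
LAMP_WORDS = {"light", "lamp"}
VERBS = {"turn", "switch"}


def parse_lamp_intent(text: str) -> Optional[str]:
    """Return "on", "off", or None for a transcribed utterance."""
    # Lowercase and strip punctuation so "off." and "Lamp" still match.
    cleaned = text.lower().translate(str.maketrans("", "", string.punctuation))
    words = set(cleaned.split())
    # Require both a lamp noun and a control verb before treating it as a command.
    if not (words & LAMP_WORDS and words & VERBS):
        return None
    if "on" in words:
        return "on"
    if "off" in words:
        return "off"
    return None


def handle_utterance(
    text: str,
    set_lamp: Callable[[str], None],
    ask_llm: Callable[[str], str],
) -> str:
    """Route one utterance to the lamp or to the LLM and return the reply to speak."""
    intent = parse_lamp_intent(text)
    if intent is None:
        return ask_llm(text)  # e.g. a request to the local Ollama server
    set_lamp(intent)  # e.g. an HTTP call to the mock lamp
    if intent == "on":
        return "It is done. The light shines."
    return "It is done. The light fades."  # hypothetical reply for "off"
```

Keeping the router free of I/O is a choice made for this sketch. The same function works whether `set_lamp` talks to a mock lamp or a real one, and whether `ask_llm` wraps Ollama or a test stub.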

Technical Highlights

Privacy-First Architecture

Every component runs locally: no cloud API calls, no telemetry, and no data leaving the machine. This was a core design principle, and the project shows that a capable AI assistant doesn't need to harvest user data.

Performance Optimizations

- CPU-optimized Whisper with int8 quantization for sub-500 ms transcription

- Efficient audio chunking (80 ms buffers) for real-time wake-word detection

- Custom system prompt engineering to keep LLM responses concise
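Two of these optimizations can be sketched without loading any model. The code assumes 16 kHz mono audio, where an 80 ms frame is 1,280 samples. It also assumes Ollama's HTTP endpoint on its default local port (`http://localhost:11434/api/generate`). The system-prompt wording, the `num_predict` cap, and the helper names are hypothetical illustrations, not the prompt Ghola actually uses.

```python
import json
import urllib.request

import numpy as np

SAMPLE_RATE = 16_000  # assumed: wake-word input as 16 kHz mono PCM
FRAME_MS = 80
FRAME_SAMPLES = SAMPLE_RATE * FRAME_MS // 1000  # 1280 samples per 80 ms frame


def iter_frames(audio: np.ndarray, frame_samples: int = FRAME_SAMPLES):
    """Yield consecutive full frames from a 1-D buffer; a trailing partial frame is dropped."""
    for start in range(0, len(audio) - frame_samples + 1, frame_samples):
        yield audio[start:start + frame_samples]


# Hypothetical wording: the real Ghola prompt is not shown in this writeup.
SYSTEM_PROMPT = (
    "You are Ghola, a Mentat-like assistant inspired by Dune. "
    "Reply in one or two short sentences suitable for speaking aloud."
)


def build_ollama_request(user_text: str, model: str = "llama3") -> dict:
    """Request body for Ollama's /api/generate endpoint."""
    return {
        "model": model,
        "system": SYSTEM_PROMPT,  # overrides the model's default system message
        "prompt": user_text,
        "stream": False,  # return one JSON object instead of a token stream
        "options": {"num_predict": 80},  # cap on generated tokens (assumed value)
    }


def ask_ollama(user_text: str, url: str = "http://localhost:11434/api/generate") -> str:
    """POST the request to the local Ollama server and return the generated text."""
    body = json.dumps(build_ollama_request(user_text)).encode("utf-8")
    req = urllib.request.Request(url, data=body, headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

In a live loop, frames would more likely arrive straight from a `sounddevice.InputStream(samplerate=16000, channels=1, dtype="int16", blocksize=1280)` callback and go to the wake-word model one at a time. `iter_frames` shows the same slicing on a recorded buffer. The prompt asks for brevity, and `num_predict` enforces it, so a long answer cannot stall the text-to-speech step.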

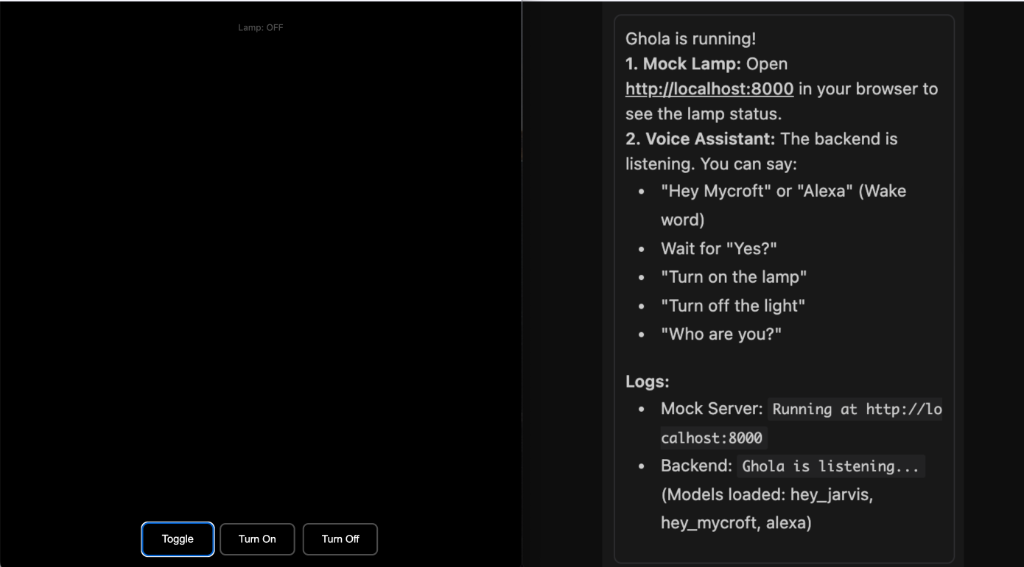

Mock Lamp System

Since pairing a Matter lamp proved challenging, I built a mock lamp for testing: a web-based visual indicator (white = on, black = off) with a REST API. This let me validate the full voice-control loop while debugging the hardware integration separately.
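Here is a minimal sketch of such a mock lamp, using only Python's standard library (`http.server`). The routes are assumptions for illustration: `GET /` serves the white/black page, `GET /state` returns JSON, and `POST /on` and `POST /off` change the state. The JSON shape and the class and function names are also assumed, because the writeup does not specify the actual API.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class LampHandler(BaseHTTPRequestHandler):
    """Hypothetical routes: GET / (page), GET /state (JSON), POST /on, POST /off."""

    def _send(self, code: int, body: str, content_type: str) -> None:
        data = body.encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", content_type)
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        with self.server.lock:
            on = self.server.lamp_on
        if self.path == "/":
            # Visual indicator: white page = on, black page = off; reloads every second.
            color = "white" if on else "black"
            page = ('<html><head><meta http-equiv="refresh" content="1"></head>'
                    f'<body style="background:{color}"></body></html>')
            self._send(200, page, "text/html")
        elif self.path == "/state":
            self._send(200, json.dumps({"on": on}), "application/json")
        else:
            self._send(404, json.dumps({"error": "not found"}), "application/json")

    def do_POST(self) -> None:
        # Drain any request body so the connection closes cleanly.
        length = int(self.headers.get("Content-Length") or 0)
        if length:
            self.rfile.read(length)
        if self.path not in ("/on", "/off"):
            self._send(404, json.dumps({"error": "not found"}), "application/json")
            return
        with self.server.lock:
            self.server.lamp_on = self.path == "/on"
            on = self.server.lamp_on
        self._send(200, json.dumps({"on": on}), "application/json")

    def log_message(self, format, *args) -> None:
        pass  # silence per-request logging


class MockLampServer(ThreadingHTTPServer):
    """HTTP server that owns the lamp state shared by all handler threads."""

    def __init__(self, address):
        super().__init__(address, LampHandler)
        self.lamp_on = False
        self.lock = threading.Lock()


def set_lamp(base_url: str, on: bool) -> bool:
    """Client call the assistant could make after parsing a lamp command."""
    req = urllib.request.Request(f"{base_url}/{'on' if on else 'off'}", data=b"", method="POST")
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.loads(resp.read())["on"]


def get_lamp(base_url: str) -> bool:
    """Read the current lamp state from the mock server."""
    with urllib.request.urlopen(f"{base_url}/state", timeout=5) as resp:
        return json.loads(resp.read())["on"]
```

To run it standalone, call `MockLampServer(("127.0.0.1", 8000)).serve_forever()` and open `http://127.0.0.1:8000/` in a browser. The page reloads every second, so it turns white or black shortly after a voice command changes the state. Binding to `127.0.0.1` keeps the mock lamp on the local machine, in line with the privacy-first design.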

Example Interaction

User: "Hey Mycroft"

Ghola: "Yes?"

User: "Turn on the lamp"

Ghola: "It is done. The light shines."

What I Learned

- Systems Integration: Wiring together ASR, LLM, and TTS into a cohesive pipeline

- Audio Processing: Managing audio streams, buffers, and device I/O in Python

- Local AI Deployment: Optimizing models for consumer hardware constraints

- Smart Home Protocols: Understanding why Matter/Home Assistant interoperability is hard

- Debugging: Extensive troubleshooting of library versions and environment configs

Reflection

This project clarified my interests within ECE: while I find hardware fascinating, I consistently enjoyed the software integration work more than wrestling with smart home firmware quirks. Ghola pushed me toward a stronger focus on intelligent tooling, system architecture, and software-heavy ECE rather than purely hardware-centric paths.

"The mystery of life isn't a problem to solve, but a reality to experience."

— Frank Herbert, Dune